FAQ & Troubleshooting

Frequently Asked Questions

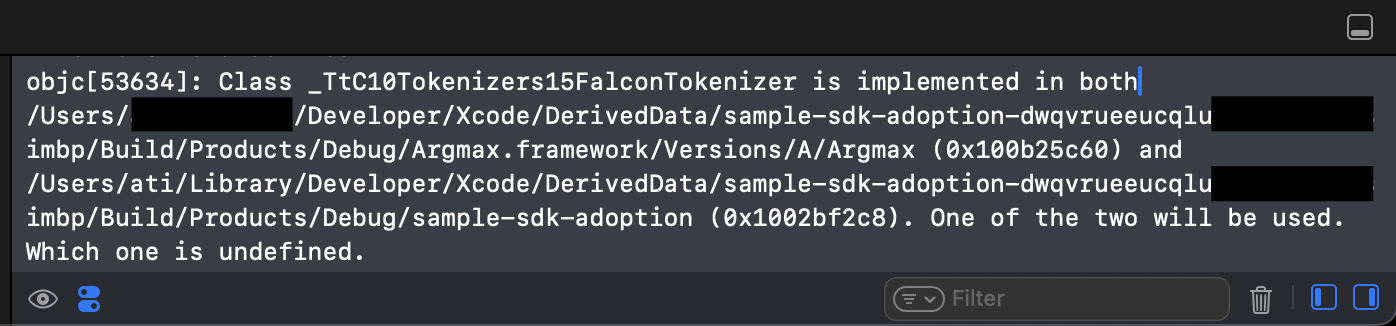

Q1: Xcode logs show `objc[...]: ... is implemented in both ... and .... One of the two will be used. Which one is undefined.`

This is a byproduct of the Argmax Pro SDK's open-core architecture, in which aliased definitions (across the open-source SDK and the Pro SDK) confuse Xcode. These logs are expected and harmless. This warning will be addressed in an upcoming release with improved packaging.

In more recent versions of libobjc, the wording of this message has changed to the following, which is still expected and harmless:

`objc[...]: ... implemented in both ... and .... This may cause spurious casting failures and mysterious crashes. One of the duplicates must be removed or renamed.`

Q2: Which architectures does the Argmax SDK support?

The Argmax SDK supports macos-arm64, ios-arm64, and ios-arm64_x86_64-simulator. If the application integrating the SDK is expected to run on other architectures, we recommend using a conditional compilation directive to check for arm64, e.g.:

    #if arch(arm64)
    import Argmax
    // Initialize SDK
    #endif

Q3: Pro SDK initialization fails with Error: generic ("Loading this model is not allowed.")

Your Argmax Pro SDK licensing may have end-user device or SDK configuration constraints including:

- minimum operating system version

- supported devices

- specific app bundle IDs (that you reported when signing the Argmax Pro SDK License Agreement)

- specific model versions (Argmax runs checksum integrity tests on each loaded model)

Some common reasons for this error:

- You are using a different version of your app whose bundle ID was not registered with Argmax.

- You modified the model files post-download (e.g. put a README inside the model files directory) which leads to a failed checksum integrity test.

If you run into this error in a context where you believe your license should not be restricted, please reach out to Argmax via your dedicated Slack channel.

Q4: How do I set up Argmax Pro SDK in ephemeral CI runners?

Argmax Pro SDK is distributed through a private SwiftPM registry as described here. You set up the registry only once and it is persisted on your machine via Keychain.

Many teams leverage ephemeral CI runners to build their projects where the private registry needs to be set up before each build job. In these cases, we recommend using the following snippet in your environment setup:

    swift package-registry set --global --scope argmaxinc https://api.argmaxinc.com/v1/sdk
    swift package-registry login https://api.argmaxinc.com/v1/sdk/login --token ARGMAX_SECRET_TOKEN --netrc --no-confirm
    xcodebuild -resolvePackageDependencies -packageAuthorizationProvider netrc -derivedDataPath /Volumes/workspace/DerivedData

where ARGMAX_SECRET_TOKEN is registered as a secret or environment variable in your CI infrastructure.

If you use an .xcworkspace that integrates multiple projects, please add -workspace to the xcodebuild command from above.
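The setup above can be wrapped in a small function so that a missing secret fails the job early. This is a minimal sketch, not an official script: it assumes the token is exposed to the job as an environment variable named ARGMAX_SECRET_TOKEN, and the `run` helper, the `DRY_RUN` switch, and the `DERIVED_DATA_PATH` override are hypothetical names introduced here for illustration. The commands themselves are the ones listed above.

```shell
# Sketch of an environment setup step for an ephemeral CI runner.
# Assumption: ARGMAX_SECRET_TOKEN is injected by the CI secret store.
REGISTRY_URL="https://api.argmaxinc.com/v1/sdk"

# Run a command, or only print it when DRY_RUN=1 (hypothetical switch).
# Note: the printed login command includes the token, so use a
# placeholder token when dry-running.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

setup_argmax_registry() {
  # Fail early with a clear message if the secret was not injected.
  if [ -z "${ARGMAX_SECRET_TOKEN:-}" ]; then
    echo "ARGMAX_SECRET_TOKEN is not set" >&2
    return 1
  fi
  run swift package-registry set --global --scope argmaxinc "$REGISTRY_URL" || return 1
  run swift package-registry login "$REGISTRY_URL/login" \
    --token "$ARGMAX_SECRET_TOKEN" --netrc --no-confirm || return 1
  # DERIVED_DATA_PATH is a hypothetical override; the default is the path used above.
  run xcodebuild -resolvePackageDependencies -packageAuthorizationProvider netrc \
    -derivedDataPath "${DERIVED_DATA_PATH:-/Volumes/workspace/DerivedData}"
}
```

Calling `setup_argmax_registry` before the build step runs the three commands in order and stops at the first failure. Running it with `DRY_RUN=1` and a placeholder token prints the commands without executing them, which can help when debugging a pipeline definition.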

Q5: I get Unsupported Swift architecture when building my app because of Argmax

It is highly likely that your app supports an architecture that Argmax does not: x86_64 (Intel Macs). Please see Q2 for the solution.

Q6: Why does my macOS app keep asking for Keychain access between builds?

Argmax uses the Keychain to store offline license information for a particular device. Keychain access decisions are tied to the signing identity that created the item. Using the same bundle ID across builds signed with different identities (for example, development vs. production) can lead to repeated prompts that require the login password to access the Argmax license Keychain entries. To prevent this issue, use distinct bundle IDs per build configuration (like com.company.myapp.dev vs. com.company.myapp) and keep signing, notarizing, and stapling consistent. Note that this is generally isolated to devices that are used for both development and release testing.

Q7: I am testing Argmax using the iOS Simulator but seeing unexpectedly low accuracy and speed. Why?

Argmax leverages the Neural Engine, and running your app in the Simulator leads to major speed and accuracy discrepancies compared to real device results. We recommend testing and benchmarking on real devices once Argmax is fully integrated. The Simulator can still be useful during the earlier stages of integration.

Q8: How do I list SDK versions and view the CHANGELOG?

You may list all published versions of argmax-sdk-swift and argmax-sdk-swift-alpha with the following command in Terminal:

    curl https://api.argmaxinc.com/v1/sdk/argmaxinc/argmax-sdk-swift{-alpha} -H "Authorization: Bearer SECRET_API_TOKEN" | jq

The CHANGELOG is accessible when you are logged in on this page. It is also viewable in Xcode as part of the SDK.
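The `{-alpha}` suffix in the command above is read here as an optional part of the package name, not as literal text to type. Under that assumption, the request can be sketched as a pair of shell functions. The function names are hypothetical, and the token is assumed to be available in an environment variable named SECRET_API_TOKEN.

```shell
# Build the version-listing URL for one of the two packages named above.
argmax_versions_url() {
  case "$1" in
    argmax-sdk-swift|argmax-sdk-swift-alpha)
      printf 'https://api.argmaxinc.com/v1/sdk/argmaxinc/%s\n' "$1"
      ;;
    *)
      echo "unknown package: $1" >&2
      return 1
      ;;
  esac
}

# Fetch the published versions and pretty-print the JSON response.
# Assumption: SECRET_API_TOKEN holds your API token.
list_argmax_versions() {
  local url
  if [ -z "${SECRET_API_TOKEN:-}" ]; then
    echo "SECRET_API_TOKEN is not set" >&2
    return 1
  fi
  url="$(argmax_versions_url "$1")" || return 1
  curl -fsS "$url" -H "Authorization: Bearer $SECRET_API_TOKEN" | jq .
}
```

For example, `list_argmax_versions argmax-sdk-swift-alpha` would query the alpha package, and `list_argmax_versions argmax-sdk-swift` the stable one.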

Q9: Why is local installation and testing different for Argmax Pro SDK Kotlin on Android?

argmax-sdk-kotlin keeps the base app small by relying on Google Play AI Pack and feature delivery for part of the runtime and model content. A regular Android Studio Run/Debug install does not go through Google Play delivery, so it does not install the AI Pack content that this package expects.

That is why local testing uses a different flow:

- For local device testing, the runtime-delivery plugin wraps the Google Play for On-device AI bundletool local testing flow.

- For Play-backed testing, you can upload the built .aab through Internal App Sharing / internal testing.

Available Gradle tasks:

- `./gradlew :app:installRuntimeDeliveryDebugForDevice --device-serial <serial>`: Uses the debug bundle path and is intended for faster day-to-day development. It is the right choice when you want quick local iteration without release-only build behavior. If only one device is connected, `--device-serial` is optional.

- `./gradlew :app:installRuntimeDeliveryReleaseForDevice --device-serial <serial>`: Uses the release bundle path and is intended for validation closer to what you ship. It runs release-specific build steps before bundletool local testing, including things like R8 and other release-only packaging behavior. If only one device is connected, `--device-serial` is optional.

- `./gradlew :app:generateRuntimeDelivery`: Regenerates the runtime-delivery project and wiring manually. This task is available even when automatic generation is enabled, but you usually do not need it in that mode because normal Gradle sync/build already regenerates the files for you. It becomes especially useful when you freeze the generated output by setting:

      extensions.configure<com.argmaxinc.runtimedelivery.plugin.RuntimeDeliverySettingsExtension>("runtimeDelivery") {
          autoGenerate = false
      }

  With `autoGenerate = false`, the plugin reuses the existing generated files instead of rewriting them on every build. That makes it possible to inspect or edit the generated runtime-delivery files locally without losing those edits immediately. In that mode, `generateRuntimeDelivery` is the explicit refresh command you run when you want to regenerate the output again.

If you want the simplest Android development flow with ordinary Android Studio installs, use argmax-sdk-kotlin-portable instead. If you want the smaller shipped app size of argmax-sdk-kotlin, use the runtime-delivery install tasks above for local testing or test the uploaded .aab through Google Play.
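The choice between the two install tasks, and the optional device serial, can be captured in a small helper. This is only an illustrative sketch: the function name is hypothetical, and it prints the Gradle command instead of running it so the result can be inspected first.

```shell
# Print the Gradle invocation for a runtime-delivery local install.
# $1: build type, "debug" or "release"
# $2: optional device serial (can be omitted when one device is connected)
runtime_delivery_install_cmd() {
  local task
  case "$1" in
    debug)   task=":app:installRuntimeDeliveryDebugForDevice" ;;
    release) task=":app:installRuntimeDeliveryReleaseForDevice" ;;
    *)
      echo "usage: runtime_delivery_install_cmd debug|release [serial]" >&2
      return 1
      ;;
  esac
  if [ -n "${2:-}" ]; then
    printf './gradlew %s --device-serial %s\n' "$task" "$2"
  else
    printf './gradlew %s\n' "$task"
  fi
}
```

For instance, `runtime_delivery_install_cmd debug` prints the debug install command without a serial, and `runtime_delivery_install_cmd release <serial>` prints the release variant targeting a specific device. Run the printed command from the project root.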

Q10: How do I verify the Argmax AI Pack and SDK bundle sizes on Google Play after upload?

After you upload your argmax-sdk-kotlin-integrated .aab to a Google Play track (Internal testing is the quickest), use the On-device AI (beta) and App bundles sections of the Play Console to confirm the AI Pack is published and to inspect what each end-user device will actually download.

1. Open the On-device AI (beta) section. From the left navigation in Play Console, go to Test and release → On-device AI (beta).

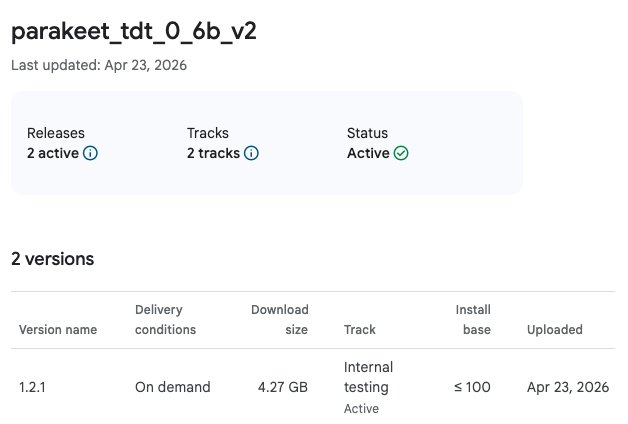

2. Verify the AI Pack (parakeet_tdt_0_6b_v2) is listed. This is the model bundle that Argmax Pro SDK Kotlin downloads on demand. If the upload was processed correctly, the pack appears with an Active status and one or more versions associated with the tracks you published to.

The 4.27 GB download size shown here is the combined size of every model variant Argmax ships across all supported NPU/SoC targets. An end-user device only downloads the single variant matching its hardware, which is typically around 600 MB per device. This pack is delivered separately from your main app via Google Play's on-device AI delivery channel.

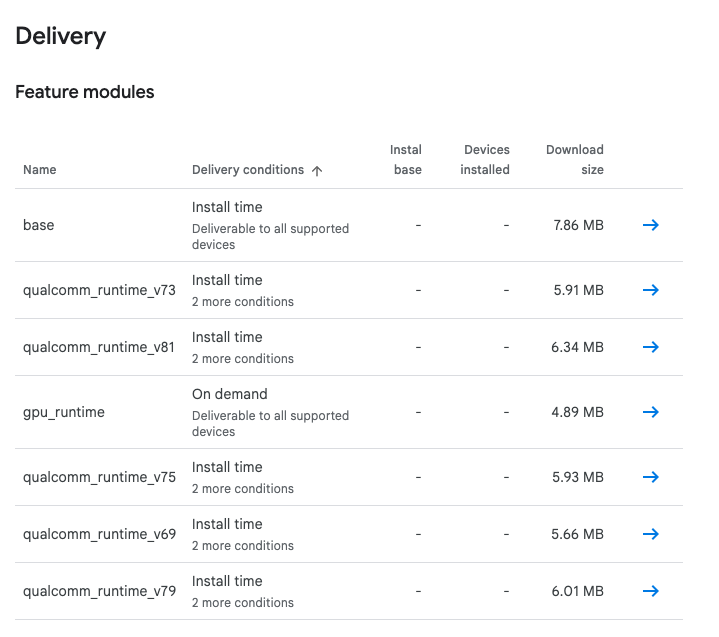

3. Inspect the app bundle to see base + per-hardware split sizes. From Test and release → App bundles, open the bundle you uploaded and click Details → Delivery to see how the runtime is split per device class. The base module is what every install gets, and one qualcomm_runtime_* feature module (or gpu_runtime, on demand) is delivered alongside it depending on the device's NPU support.

In this example, the base module is 7.86 MB, which is the total install-time payload (host app code, resources, the SDK's base content, and any other shared assets; the SDK is only one contributor). On top of that, each device pulls one 5–7 MB runtime split matching its hardware (or the 4.89 MB gpu_runtime on demand for devices without a supported NPU), and the AI Pack downloads post-install.

If the AI Pack does not appear under On-device AI (beta), or the feature modules list does not include the runtime modules you expect, re-check that you are using argmax-sdk-kotlin (not argmax-sdk-kotlin-portable) and that the runtime-delivery plugin produced the AI Pack and feature module sources at build time.
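As a rough worked example, the per-device download can be estimated by adding the three components described above. The figures are the ones from this example bundle (a typical AI Pack variant of about 600 MB is assumed), the function name is hypothetical, and a simple sum ignores any compression or delta updates that Google Play may apply.

```shell
# Sum the per-device download components (all values in MB).
# Usage: estimate_device_download_mb <base> <runtime_split> <ai_pack_variant>
estimate_device_download_mb() {
  awk -v base="$1" -v runtime="$2" -v pack="$3" \
    'BEGIN { printf "%.2f\n", base + runtime + pack }'
}

# NPU device, using the upper end of the 5-7 MB runtime split range.
estimate_device_download_mb 7.86 7 600

# Device without a supported NPU, using the 4.89 MB gpu_runtime module.
estimate_device_download_mb 7.86 4.89 600
```

Both cases come out at a little over 600 MB, far below the 4.27 GB combined pack size shown in Play Console, because only one model variant and one runtime split reach any given device.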